VicPD spent $24,000 so officers can ask an AI how to do their job

Tucked away in its $90 million budget document, VicPD announced it had “developed an internally hosted, closed AI system for in-house training and specialized uses.” It provided no details about what the system did or what it cost, and Victoria councillors asked no questions.

Internal VicPD documents, obtained through an FOI request, show that VicPD has spent $24,000 to run its own equivalent of ChatGPT, which officers can use to input sensitive police data and to ask questions about policing and the law.

Launching VicPD’s “internal AI”

In August 2025, Dan Phillips, VicPD’s Director of Information Risk Management, emailed all VicPD staff to launch an “internal AI tool” for VicPD. He said it had “the benefits and features of other AI models, but without the risk of leaking confidential, sensitive or private data.”

Screenshot of August 18, 2025 email launching VicPD’s internal AI.

Receipts show VicPD paid $23,801 for four Mac Studio computers to run the system. Phillips said VicPD employees “can use the tool any way you would use a commercial AI tool, such as ChatGPT or CoPilot.” Staff could “ask it questions,” and it would also “assist with writing documents, analyse data, [and] perform research.”

VicPD had linked its AI tool to VicPD’s policies and procedures, with plans to add the “Canadian Criminal Code, local bylaws and case law data sources.”

VicPD created a guide on “AI Prompts” for staff, which suggests they ask AI software to “Explain [complex topic] like I’m 10 years old.” Rather than being subject matter experts, or at least consulting VicPD policy on issues like how to respond to intimate partner violence, officers can ask a computer program what it thinks VicPD’s policy is. And VicPD’s plan to add the Criminal Code means that officers may already be asking it to interpret the law, too.

One example of a prompt VicPD says “will get the best results.” From VicPD’s guidance document on “AI Prompts.”

People can live or die as a result of officers’ actions. A VicPD officer could act based on an incorrect AI summary of a policy, or a false interpretation of a law. Or an officer might feed police reports and intelligence on someone into the AI, which could advise the officer to prepare to use force, putting the person in danger. VicPD might argue that officers can save time by not doing their own work, but it’s a tradeoff with potentially devastating consequences.

VicPD’s use of AI could also limit police accountability. If officers stop writing their own reports, we can expect some officers will use that fact to later claim their reports don’t accurately reflect their actions. There may also be only a limited record of when officers used AI to inform a decision, as VicPD says “the system does not create logs or retain records generated by the system.”

In addition to giving officers the option to cede police report writing, research, data analysis, and interpreting the Criminal Code to a computer program, VicPD says other ways it could “leverage AI technologies in the near future” include translation and transcription, creating training videos, and conducting “threat and vulnerability scanning.”

In January 2026, B.C.’s Office of the Information and Privacy Commissioner wrote that “Commonly reported AI errors [in transcription], including hallucinations, omissions, and misspellings, could … have catastrophic consequences in healthcare settings.” The same is surely true for policing, where an incorrect police transcript or translation could be used against someone, with immediate consequences for that person before the truth is known.

There could have been a debate about whether any of this is a public good. Instead, VicPD spent the money and announced they’d already started using the system without any public input or comment from elected officials.

Safety for whom?

VicPD’s greatest AI fear seems to be that confidential information might leak out. VicPD’s first internal Q&A about its AI system asks “Is it safe to use?” The answer VicPD gives is “YES,” solely for the reason that it’s “not connected to the internet.”

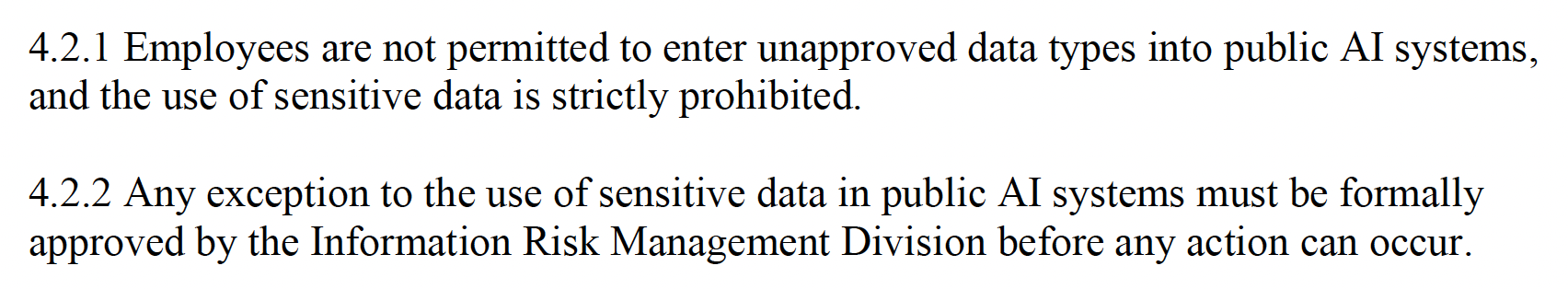

There are bigger safety risks than a data breach, but VicPD even trips over that low bar. VicPD’s official AI security policy says “the use of sensitive data is strictly prohibited” in an online, public-facing AI system, like ChatGPT. But it immediately contradicts itself with a secondary policy that says VicPD employees can input “sensitive data” into public AI systems as long as they have approval.

Excerpt from VicPD’s AF300 – Artificial Intelligence (AI) Security Policy.

That puts VicPD offside with the B.C. government’s official AI use policy, which says “Employees must not put any confidential information, including personal information, into publicly available gen AI tools like ChatGPT.” VicPD doesn’t explain when it might put sensitive data in something like ChatGPT, but they’ve left the door open, disregarding the one thing they say makes their internal AI “safe.”

For any use of AI, whether public or internal, VicPD has also given itself an out if something goes wrong. VicPD says employees are “accountable for any issues arising from their elective use of Gen AI as part of business processes, including, but not limited to … sensitive data exposure, poor data quality, and bias or discrimination in outputs.”

While VicPD can say officers are liable if they act on false information provided by its internal AI, it’s VicPD that created the tool. And regardless of who VicPD thinks is responsible, it’s the public that will be hurt. If VicPD can’t trust its officers to read VicPD’s own policies without the help of an AI summary, why should we trust that those officers will know if the AI summary is accurate?

Compare VicPD’s statements about AI oversight to the recent story about Canada’s Immigration Department, which rejected someone’s permanent residence application based on AI errors. The Immigration Department said that even though it’s using AI to process immigration applications, humans are the ultimate decision makers. Maybe an individual will be held accountable for that mistake, but the organization’s use of AI still altered the course of someone’s life. VicPD’s AI may not be connected to the internet, and VicPD may say employees are accountable if they act on bad information, but that will provide little comfort to any victims of those mistakes.

Garbage in, garbage out in VicPD’s secret AI model

VicPD’s AI documents ask “How accurate is it?” Because it’s not connected to the internet, VicPD says, its AI can’t answer questions about news from the previous day, or questions about individuals, because they “often require social media or public information sources, which are not part of our AI model.”

What is in VicPD’s AI model, then? VicPD says even most of its employees aren’t allowed to know, let alone the public:

“Knowledge and specific details about how the model has been trained and how it works must be kept strictly confidential, with access to such information being granted on a need-to-know basis.”

VicPD’s secrecy about its AI model raises questions about the advice it’s giving officers. We know that VicPD says their AI can “query internal data sources,” and we also know that VicPD’s policing is racist. If VicPD trained its AI using VicPD data, such as general occurrence reports, how is it interpreting VicPD’s disproportionate policing of BIPOC people?

Some police forces are using AI “to predict … where future crimes may occur” or “who is likely to commit an offence.” In 2025, Amnesty International UK said those systems “have been built with discriminatory data and serve only to supercharge racism.”

VicPD’s AI documents say “Biased data can lead to unfair or harmful outcomes,” and “Unbiased data must be used to produce fair predictions.” If VicPD trained its AI on VicPD data, which demonstrate systemic racism, why would its outputs be anything but? VicPD’s secrecy means we don’t know the type of advice its officers might be getting.

Personal information in VicPD’s AI system

VicPD says its AI technology includes “multiple LLM [large language models] available for end users” including “VicPD Custom LLM (policies, case files, image/video processing).” We know that VicPD’s AI is linked to VicPD policies, so this statement strongly implies that VicPD also linked its AI system to existing police files, images, and video, or that officers are inputting police files, images, and video into its AI system.

Police files include personal information. If you’ve ever been referenced in a VicPD police report, it’s possible your personal information was used to train its AI. And if VicPD didn’t train its AI on police reports, officers are apparently still welcome to ask the AI what it thinks about a report you’re mentioned in. VicPD says officers shouldn’t input police files into public AI programs, implying their use with VicPD’s internal AI is fine.

Excerpt from VicPD’s “AI Do’s and Don’ts” guidance document.

VicPD says it should not “deploy AI on personal or sensitive data without explicit consent or proper safeguards.” If VicPD has used anyone’s personal information in its internal AI system, whether to train its AI or to ask it specific questions, “explicit consent” is something we know it doesn’t have.

VicPD’s reference to image and video processing also raises the question of whether VicPD is using AI facial recognition technology to identify people in photos and video. In a document on “AI Definitions,” VicPD mentions facial recognition as one general use for AI.

VicPD’s history of privacy breaches

VicPD says it needs “regular user access monitoring” for its AI system, and to “Keep track of AI’s decisions and performance,” but it didn’t release any records suggesting that work is actually happening. And there’s good reason to think it won’t, given VicPD’s poor record of protecting people’s personal information.

B.C.’s Police Complaint Commissioner reports that at least 51 officers have committed misconduct by misusing police databases, including one VicPD officer. Despite the misuse of police databases in B.C., as of 2023, VicPD said “no audits [had] been conducted” of officer use of its PRIME database. This is the same organization that accidentally gave someone outside VicPD access to the entire police database, and where an officer decided to access my personal information after I filed an FOI.

If VicPD wouldn’t audit access to a police database they knew had been misused, why would they meaningfully monitor the use of their new AI system?

Conclusion

As with all things policing, reform is not the answer here. Even if VicPD did audit its officers’ use of AI, it wouldn’t address the issue of whether police should use AI at all, including to write police reports and to interpret policies and the law, which could have immense consequences when the program gets something wrong.

As part of this FOI, I asked for lots of records about VicPD’s AI system that it didn’t provide. There was no proposal to create the system, no briefing note, and no business case. VicPD couldn’t find any meeting notes related to the project. And despite its pledge to track use of the system, it didn’t provide any reports on how the system is being used.

Nor did VicPD release any examples of records generated by its AI system. VicPD said those records aren’t retained, even though internally, VicPD told its staff that previous queries remain available to individual users.

VicPD’s use of technology should be severely restricted. Instead, it’s expanding unchecked, burning money as the city cuts programs that benefit the public. VicPD was so desperate to make sure its officers could use AI to write reports and interpret policy that it spent $24,000 to make its own system, without requesting council approval as part of last year’s budget. VicPD also recently spent over $100,000 on drones, most of which it didn’t put before council, either. And they want to buy bodycams, which will cost millions and increase police surveillance.

VicPD’s internal AI documents say “AI is not Skynet or a self-aware intelligence that will destroy humans,” but it’s hard to take that advice seriously when it may as well be coming from Cyberdyne Systems.

Excerpt from VicPD’s “AI Definitions” guidance document.

VicPD regularly hurts people, and there’s no reason to expect its use of AI will be any different, from potential privacy violations to false legal interpretations that could have devastating consequences.

FOI records released by VicPD